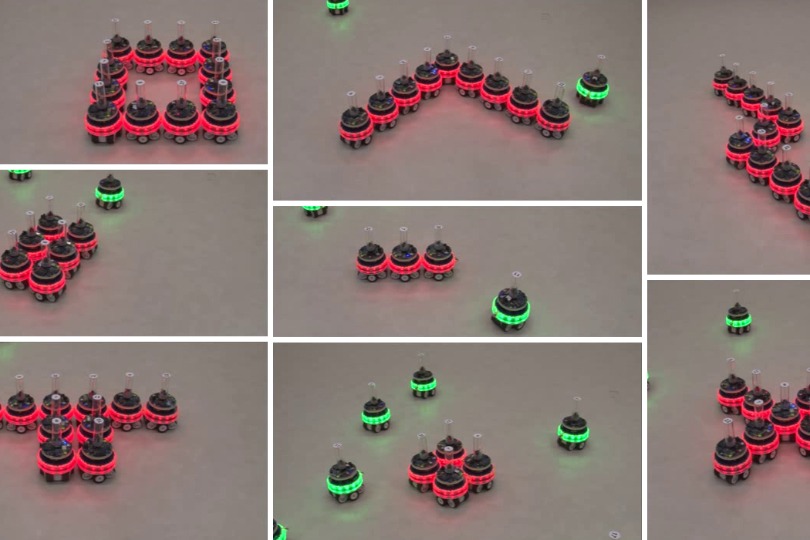

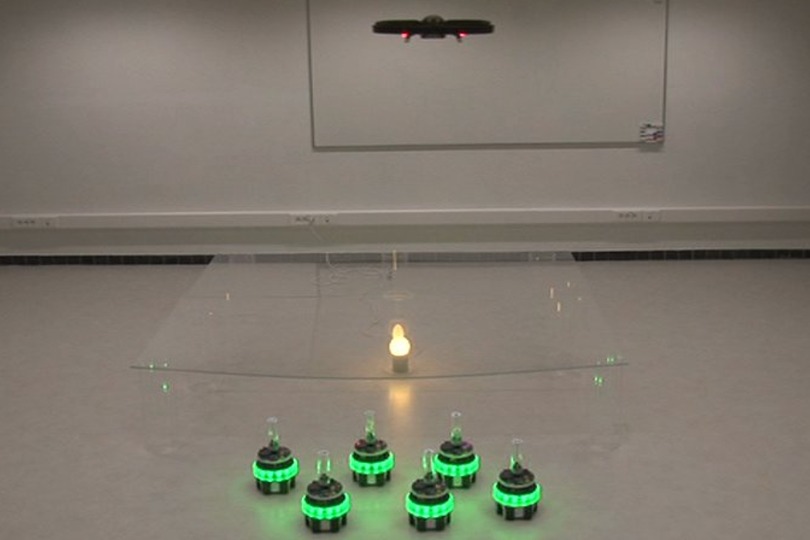

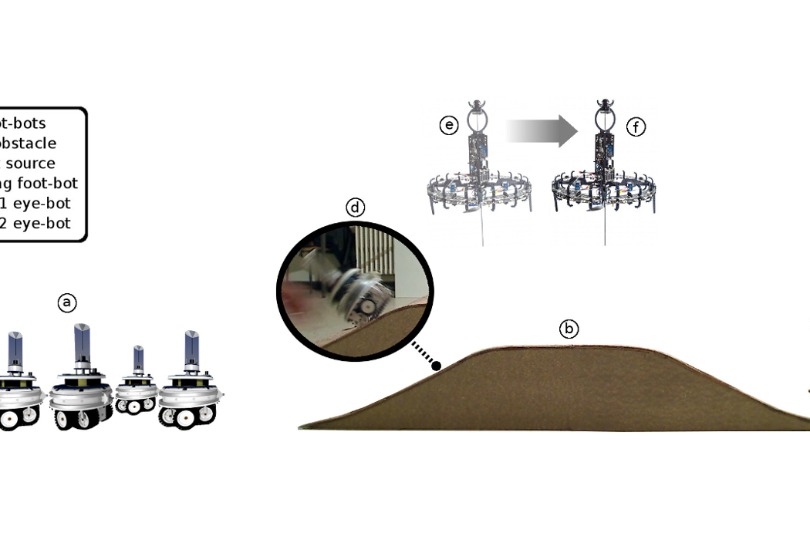

In the research article “Supervised morphogenesis: exploiting morphological flexibility of self-assembling multirobot systems through cooperation with aerial robots”, Nithin and his colleagues from multiple research labs and the Fraunhofer Institute present the results of two case studies using two different autonomous aerial platforms and up to six self-assembling autonomous robots. The research is a significant step towards realizing the true potential of self-assembling robots, because it enables autonomous morphological adaptation to unknown tasks and environments.

Existing self-assembling robots are often pre-programmed by human operators, who precisely define the scale and shape of the target morphologies before deployment. Alternatively, the robots rely on specific environmental cues to infer target morphologies. Both approaches are needed primarily because self-assembling robots tend to be relatively simple robotic units: they lack the sensory apparatus to characterize the environment accurately enough to find a suitable target morphology for a given situation.